What healthcare startups need to know before building or deploying AI in the AI Act era

Artificial intelligence is rapidly transforming the healthcare sector. From clinical decision-support systems and medical imaging tools to digital triage platforms and predictive analytics for hospital operations, AI-driven technologies are increasingly central to health innovation.

For healthcare startups developing these tools, the regulatory landscape in Europe has changed significantly with the adoption of the EU Artificial Intelligence Act (AI Act). The regulation establishes the first comprehensive framework governing artificial intelligence across the European Union. Its objective is to ensure that AI systems placed on the EU market are safe, transparent, and respectful of fundamental rights.

For founders building AI-based health technologies, compliance with the AI Act is no longer a distant consideration. It is becoming a core element of product development, regulatory strategy, and investor due diligence. Because many healthcare AI applications fall into the high-risk category under the AI Act, startups in this sector will face stricter regulatory obligations than companies using AI in lower-impact contexts.

Understanding these obligations early can significantly influence product design, clinical validation strategies, and the ability to scale within the European market.

Why the AI Act matters particularly for healthcare startups

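Healthcare is one of the sectors most directly affected by the AI Act. Many AI systems used in medical contexts are classified as high-risk, either because they are medical devices (or safety components of medical devices) that require third-party conformity assessment, or because they fall under a use case listed in Annex III of the Act, such as emergency patient triage or evaluating eligibility for healthcare services. This is especially likely when a system influences diagnosis, treatment decisions, patient triage, or access to healthcare services.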

Examples of healthcare AI solutions that may fall within the high-risk category include:

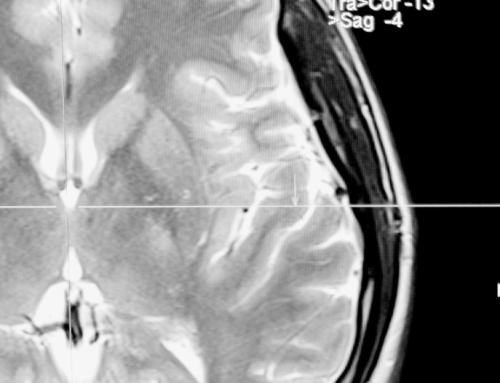

- AI tools that analyse medical imaging to support diagnosis (radiology, pathology, dermatology)

- clinical decision-support systems used by healthcare professionals

- predictive tools used to estimate disease risk or treatment outcomes

- AI used in hospital triage or patient prioritisation

- AI-driven systems used in medical devices or digital therapeutics

- algorithms that help determine eligibility for certain treatments or healthcare services

In many cases, these systems will already be regulated under the EU Medical Devices Regulation (MDR). The AI Act adds a further layer of requirements that specifically address algorithmic risk management, transparency, and governance.

For healthcare startups, this means that regulatory strategy must now consider both medical device regulation and AI governance requirements.

Does the AI Act apply to your healthtech product?

The AI Act applies to companies that develop, place on the market, or deploy AI systems within the EU. It also applies to providers and deployers established outside the EU where the output produced by their AI system is used in the EU, for example by European hospitals or patients.

Healthcare startups may fall into different roles under the regulation:

- AI provider – the company developing and placing an AI system on the market

- AI deployer – hospitals, clinics, or healthcare providers using the system

- distributor or importer – entities making the system available in the EU market

Most healthcare startups developing AI-powered tools will qualify as providers, which carries the most significant regulatory obligations.

Even startups that integrate third-party AI models into their platforms may still be responsible for ensuring that the resulting system complies with the AI Act.

High-risk AI systems in healthcare

Under the AI Act, many healthcare AI applications qualify as high-risk systems. High-risk AI systems are subject to strict requirements before they can be placed on the EU market.

These obligations include:

Risk management systems

Startups must implement a continuous risk management framework throughout the lifecycle of the AI system. This includes identifying potential harms to patients or users and implementing safeguards to mitigate those risks.

Data governance and dataset quality

Healthcare AI relies heavily on training data. Under the AI Act, developers must ensure that datasets used to train and validate AI systems are:

- relevant and representative

- sufficiently complete

- free from systematic bias that could affect outcomes

- appropriately documented

For example, a diagnostic AI trained only on data from a limited demographic population could produce biased results when used in broader clinical settings.
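A representativeness check of this kind can be sketched in a few lines of Python. The example below is a minimal illustration, not a prescribed method: the record schema, the `age_band` attribute, the reference shares, and the 10-point tolerance are all assumptions chosen for demonstration. The AI Act does not define numeric thresholds for representativeness.

```python
from collections import Counter


def representation_gaps(records, attribute, reference_shares, tolerance=0.10):
    """Return groups whose share in the dataset deviates from a reference share.

    records: list of dicts describing training examples (hypothetical schema).
    attribute: key to group by, e.g. "age_band".
    reference_shares: expected share of each group in the intended population.
    tolerance: absolute deviation allowed before a group is flagged (assumed value).
    """
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = {"observed": round(observed, 3), "expected": expected}
    return gaps


# Illustrative data only: 8 of 10 records come from a single age band.
training_records = (
    [{"age_band": "18-40"}] * 8
    + [{"age_band": "41-65"}] * 1
    + [{"age_band": "65+"}] * 1
)
reference = {"18-40": 0.35, "41-65": 0.35, "65+": 0.30}
gaps = representation_gaps(training_records, "age_band", reference)
print(gaps)
```

Here every age band is flagged, because the dataset is dominated by one group. In practice the reference shares would come from the population in which the system is intended to be used, and the result would be recorded in the dataset documentation.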

Technical documentation

Under the EU AI Act, technical documentation is one of the core compliance obligations for providers of high-risk AI systems. The purpose of this documentation is to allow regulators, notified bodies, and market surveillance authorities to evaluate whether the AI system complies with the legal requirements before and after it is placed on the market. For startups, this means that documentation cannot be treated as an afterthought. It must be developed alongside the system throughout the entire product lifecycle.

Below are the key components of technical documentation and what providers of high-risk AI systems are expected to implement in practice.

System architecture and design documentation

Providers must clearly document the structure and functioning of the AI system. This includes a detailed description of how the system operates, the technologies used, and how different components interact.

In practice, this documentation should include:

- a description of the AI model or models used, including model type (for example neural networks, gradient boosting, or large language models)

- the overall system architecture, including data pipelines, training environments, and deployment infrastructure

- the interaction between the AI component and other software modules

- integration with external systems, APIs, or third-party AI services

- version control and system update mechanisms

For healthcare or other regulated sectors, this section should also explain how the AI system integrates into the clinical or operational workflow and what role the algorithm plays in decision-making.

The goal is to allow regulators to understand how the system functions and where risks could arise.

Training data sources and data governance

Data governance is a critical requirement under the AI Act. Providers must document how datasets used to train, validate, and test the AI system were obtained and processed.

This includes:

- the origin of training datasets (clinical databases, publicly available datasets, proprietary datasets, etc.)

- data collection methods and selection criteria

- preprocessing steps such as data cleaning, normalisation, and annotation

- methods used to ensure data quality, representativeness, and completeness

- measures taken to detect and mitigate bias in the datasets

- procedures used to protect personal data where applicable

For healthcare AI systems, this documentation should also clarify whether datasets contain personal data or health data and how this complies with GDPR requirements.

Startups must also be able to demonstrate that datasets are appropriate for the intended purpose of the AI system. For example, an AI tool intended to support dermatological diagnosis should be trained on data representing diverse patient populations to avoid discriminatory outcomes.
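One way to keep this information close to the data is a small, versioned dataset record. The sketch below is an assumption about how such a record might look: the `DatasetRecord` fields mirror the items listed above, but the field names, the example values, and the `open_questions` helper are hypothetical and not a format required by the AI Act.

```python
import hashlib
import json
from dataclasses import dataclass, field, asdict


@dataclass
class DatasetRecord:
    """Hypothetical documentation record for one dataset version."""
    name: str
    origin: str
    selection_criteria: str
    preprocessing_steps: list = field(default_factory=list)
    bias_checks: list = field(default_factory=list)
    contains_health_data: bool = False
    gdpr_legal_basis: str = ""
    sha256: str = ""


def fingerprint(rows):
    """Hash a canonical JSON form of the rows so the exact version can be cited."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def open_questions(record):
    """List documentation fields that are still empty."""
    missing = []
    for key, value in asdict(record).items():
        # A legal basis only needs documenting when health data is involved.
        if key == "gdpr_legal_basis" and not record.contains_health_data:
            continue
        if value in ("", [], None):
            missing.append(key)
    return missing


# Illustrative rows and values only.
rows = [{"id": 1, "label": "benign"}, {"id": 2, "label": "malignant"}]
record = DatasetRecord(
    name="derm-images-v1",
    origin="proprietary clinical database (hypothetical)",
    selection_criteria="adults with histologically confirmed diagnosis",
    preprocessing_steps=["de-identification", "resize"],
    contains_health_data=True,
    sha256=fingerprint(rows),
)
print(open_questions(record))
```

For this record the helper reports that bias checks and the GDPR legal basis are still undocumented, which is the kind of gap that is far easier to close during development than during a regulatory review.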

Validation, testing, and performance evaluation

Providers must document how the AI system was evaluated before deployment and how its performance was measured.

Technical documentation should include:

- the validation and testing methodologies used during development

- performance benchmarks and evaluation metrics

- testing conditions and datasets

- stress testing or robustness testing

- comparison with alternative methods or baseline models where relevant

For healthcare applications, this may also involve:

- clinical validation studies

- retrospective or prospective testing using medical datasets

- comparison with clinician decision-making

The documentation should clearly explain how the system performs under different conditions and whether there are known limitations in accuracy or reliability.
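Reporting performance "under different conditions" usually means breaking results down by subgroup rather than quoting a single headline figure. The following Python sketch computes sensitivity and specificity per group. The group labels and the eight example cases are invented for illustration, and real validation would use far larger samples with confidence intervals.

```python
from collections import defaultdict


def subgroup_metrics(cases):
    """Compute sensitivity and specificity per subgroup.

    cases: iterable of (group, y_true, y_pred), where 1 means the condition
    is present (y_true) or predicted (y_pred).
    """
    tallies = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in cases:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "fp" if y_pred == 1 else "tn"
        tallies[group][key] += 1

    report = {}
    for group, t in tallies.items():
        positives = t["tp"] + t["fn"]
        negatives = t["tn"] + t["fp"]
        report[group] = {
            "n": positives + negatives,
            "sensitivity": t["tp"] / positives if positives else None,
            "specificity": t["tn"] / negatives if negatives else None,
        }
    return report


# Illustrative cases only: (subgroup, ground truth, model prediction).
cases = [
    ("skin_type_I-II", 1, 1), ("skin_type_I-II", 1, 1),
    ("skin_type_I-II", 0, 0), ("skin_type_I-II", 0, 0),
    ("skin_type_V-VI", 1, 1), ("skin_type_V-VI", 1, 0),
    ("skin_type_V-VI", 0, 0), ("skin_type_V-VI", 0, 1),
]
report = subgroup_metrics(cases)
for group, metrics in report.items():
    print(group, metrics)
```

A gap between subgroups like the one in this toy data is exactly the kind of known limitation that the technical documentation is expected to state openly.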

Performance metrics and monitoring mechanisms

The AI Act requires providers to document the performance characteristics of the AI system and ensure that it meets appropriate levels of accuracy, robustness, and cybersecurity.

This typically includes:

- key performance indicators used to evaluate the system

- acceptable error rates and performance thresholds

- system robustness under different operational conditions

- mechanisms for detecting performance degradation over time

In addition, providers must describe post-market monitoring mechanisms. This means startups must establish processes to track how the system performs once deployed in real-world environments.

For example, providers may need procedures for:

- monitoring model drift

- identifying unexpected system behaviour

- collecting feedback from users or deployers

- updating models when performance changes
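Drift monitoring can start with a simple statistic. The sketch below uses the Population Stability Index (PSI) to compare the distribution of model scores seen in production with the distribution seen during validation. PSI is one common choice among several and is not named in the AI Act. The bin edges, the example scores, and the 0.25 alert threshold (a widely quoted rule of thumb) are assumptions.

```python
import math


def bin_shares(values, edges, eps=1e-6):
    """Share of values in each bin defined by ascending edges; eps avoids log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        # The bin index is the number of edges at or below the value.
        counts[sum(v >= e for e in edges)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(baseline, live, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(live, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Illustrative model scores only.
EDGES = [0.25, 0.5, 0.75]
ALERT_THRESHOLD = 0.25  # rule-of-thumb value, not a regulatory figure
validation_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.85, 0.95]

drift = population_stability_index(validation_scores, live_scores, EDGES)
if drift > ALERT_THRESHOLD:
    print(f"PSI {drift:.2f} exceeds {ALERT_THRESHOLD}: trigger a documented review")
```

Whatever statistic is used, the post-market monitoring plan should state the threshold in advance and who is responsible for the review it triggers.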

Limitations, risks, and intended use

Technical documentation must clearly describe the intended purpose of the AI system and the contexts in which it should or should not be used.

This includes:

- the specific tasks the AI system is designed to perform

- the categories of users (for example clinicians, financial institutions, HR professionals)

- operational environments where the system is intended to operate

- known technical limitations

Providers must also identify foreseeable risks, such as incorrect predictions, biased outcomes, or system failures. Where such risks exist, the documentation must describe mitigation measures.

In healthcare AI, this could include situations where the system may produce unreliable results for certain patient groups or under specific clinical conditions.

Human oversight mechanisms

The AI Act requires high-risk systems to enable effective human oversight. Providers must therefore document how humans interact with the AI system and how they can supervise or override its outputs.

Documentation should explain:

- what role human operators play in reviewing or validating outputs

- how users can interpret AI-generated results

- mechanisms for overriding or correcting system decisions

- training or instructions provided to users

For healthcare startups, this often means demonstrating that the AI system functions as decision support for a clinician rather than as an autonomous decision-maker.
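In software terms, human oversight often comes down to a workflow in which no AI output becomes final until a named person has accepted or overridden it. The sketch below illustrates that pattern. The `Suggestion` class, the `review` function, and all example values are hypothetical, and a real clinical system would need authentication, audit logging, and far richer data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    """An AI output that stays a suggestion until a clinician reviews it."""
    case_id: str
    ai_finding: str
    ai_confidence: float
    reviewer: Optional[str] = None
    final_finding: Optional[str] = None
    override_reason: Optional[str] = None

    @property
    def released(self):
        # Nothing is released without a human decision.
        return self.final_finding is not None


def review(suggestion, reviewer, accept, corrected_finding=None, reason=None):
    """Record the clinician's decision; overrides must be justified."""
    if accept:
        suggestion.final_finding = suggestion.ai_finding
    else:
        if not corrected_finding or not reason:
            raise ValueError("an override needs a corrected finding and a reason")
        suggestion.final_finding = corrected_finding
        suggestion.override_reason = reason
    suggestion.reviewer = reviewer
    return suggestion


# Illustrative values only.
suggestion = Suggestion("case-001", "suspicious lesion", 0.82)
print(suggestion.released)  # False: no clinician has reviewed it yet
review(suggestion, reviewer="dr_a", accept=False,
       corrected_finding="benign naevus", reason="dermoscopy pattern")
print(suggestion.final_finding, "| overridden because:", suggestion.override_reason)
```

Capturing the override reason also produces useful evidence: a pattern of overrides for a particular patient group can point to a limitation that belongs in the documentation.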

Record-keeping and traceability

Providers must maintain logs that allow the system’s operation to be traced. Technical documentation should explain what data is logged, how long logs are retained, and how they can be used to investigate incidents or respond to regulatory inquiries.

Traceability is particularly important for systems that influence important decisions affecting individuals, such as medical diagnoses or credit assessments.
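A log is only useful for traceability if it can be trusted after the fact. One common technique, shown here as a minimal sketch, is to chain entries together with hashes so that later edits become detectable. The `AuditLog` class and the logged fields are assumptions for illustration. The AI Act does not prescribe this mechanism, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory, hash-chained event log (illustrative sketch)."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        payload = {k: entry[k] for k in ("timestamp", "event", "prev_hash")}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, event, timestamp=None):
        """Add an event, linking it to the hash of the previous entry."""
        entry = {
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "event": event,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative events only.
log = AuditLog()
log.append({"case_id": "case-001", "model_version": "1.4.0", "output": "suspicious lesion"})
log.append({"case_id": "case-001", "reviewer": "dr_a", "action": "override"})
print(log.verify())
```

Logging the model version with every output, as in the first event, is what later allows an incident to be traced back to a specific model and training dataset.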

Integrating documentation into the development process

For startups, the most practical approach is to integrate technical documentation directly into the AI development lifecycle.

This often involves:

- documenting datasets and models during development

- maintaining version control for models and training data

- implementing internal compliance checklists

- establishing clear responsibilities for AI governance within the organisation

Building documentation in parallel with development is far more efficient than attempting to reconstruct it later during regulatory review.
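An internal compliance checklist can be automated in the same way as a test suite, so that a release is blocked when documentation is missing or out of date. The sketch below assumes a simple manifest that maps each documentation section to a file path and the model version it describes. The section names follow the components discussed above, but the manifest format, paths, and version numbers are hypothetical.

```python
# Section names mirror the documentation components described in this article.
REQUIRED_SECTIONS = [
    "system_architecture",
    "training_data_governance",
    "validation_and_testing",
    "performance_and_monitoring",
    "intended_use_and_limitations",
    "human_oversight",
    "record_keeping",
]


def documentation_gaps(manifest, model_version):
    """Return a list of problems that should block a release."""
    gaps = []
    for section in REQUIRED_SECTIONS:
        entry = manifest.get(section)
        if not entry or not entry.get("path"):
            gaps.append(f"{section}: missing")
        elif entry.get("model_version") != model_version:
            gaps.append(
                f"{section}: documents version {entry.get('model_version')}, "
                f"not {model_version}"
            )
    return gaps


# Illustrative manifest only.
manifest = {
    "system_architecture": {"path": "docs/architecture.md", "model_version": "1.4.0"},
    "training_data_governance": {"path": "docs/data.md", "model_version": "1.3.0"},
    "human_oversight": {"path": "", "model_version": "1.4.0"},
}
gaps = documentation_gaps(manifest, "1.4.0")
for gap in gaps:
    print(gap)
```

Run as part of continuous integration, a check like this turns "documentation in parallel with development" from a good intention into a gate that the team cannot skip.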

In the context of the EU AI Act, technical documentation is not simply a formal requirement. It forms the foundation for demonstrating that an AI system has been designed, tested, and deployed responsibly. For startups developing high-risk AI systems, particularly in sectors such as healthcare, fintech, or employment technologies, establishing robust documentation practices early can significantly reduce regulatory risk and support long-term scalability.

Transparency obligations for healthcare AI

Even where an AI system does not qualify as high-risk under the AI Act, providers and deployers must still comply with certain transparency obligations. These requirements aim to ensure that users understand when artificial intelligence plays a role in generating information, recommendations, or decisions.

Under the regulation, individuals must be informed when:

- they are interacting with an AI system rather than a human

- content, images, or recommendations have been generated or significantly modified by AI

- automated systems influence decisions that may affect them

In healthcare settings, these obligations can arise in several practical scenarios. For example, digital health platforms may use AI chatbots to provide preliminary medical guidance, symptom checkers may generate automated recommendations, or patient portals may include AI-generated summaries of clinical information. In such cases, patients and healthcare professionals should be clearly informed that the output has been produced with the assistance of artificial intelligence.

Transparency also extends to healthcare professionals using AI-based tools. Doctors and clinicians should be able to understand when an output or recommendation is generated by an algorithm and how it should be interpreted within the clinical decision-making process. While the AI Act does not require full disclosure of proprietary algorithms, it does expect that the role of AI in generating outcomes is communicated in a clear and understandable manner.

In healthcare environments, transparency is closely linked to patient trust, accountability, and informed decision-making. As AI becomes more integrated into clinical workflows, clearly communicating its role will become both a regulatory requirement and an ethical expectation within digital health innovation.

The intersection of the AI Act, MDR, and GDPR

Healthcare startups rarely operate under a single regulatory framework. In practice, AI-based health technologies are typically subject to multiple overlapping legal regimes, each addressing different aspects of safety, governance, and data protection.

Most AI-driven healthcare products will need to consider compliance with three key regulatory frameworks:

- EU AI Act – establishes governance, safety, transparency, and risk management requirements for AI systems

- Medical Devices Regulation (MDR) – regulates medical devices, including software used for medical purposes

- GDPR – governs the processing of personal data, including sensitive health data used for training or operating AI systems

These frameworks apply simultaneously rather than as alternatives. A healthcare startup developing an AI-driven product may therefore need to meet obligations under all three.

For example:

- Training machine learning models using patient data or medical records will trigger GDPR requirements related to lawful processing, data minimisation, and security safeguards.

- Deploying AI within a diagnostic or clinical decision-support tool may qualify the software as a medical device, requiring conformity assessment and certification under the MDR.

- The algorithm itself, particularly if it influences medical decisions or access to care, may fall within the high-risk AI category under the AI Act and therefore require additional governance and documentation measures.

Because these frameworks intersect, regulatory compliance cannot be treated as a separate step at the end of development. Instead, healthcare startups benefit from integrating legal and regulatory considerations early in the product design and development process, ensuring that data practices, clinical validation, and AI governance structures align with all applicable requirements.
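The way the three frameworks stack can be illustrated with a simple screening helper. This is a deliberately coarse sketch for early product planning, not a legal determination. The `ProductProfile` fields and the decision rules are simplifying assumptions based on the examples above, and actual classification depends on the detailed criteria in each regulation.

```python
from dataclasses import dataclass


@dataclass
class ProductProfile:
    """Hypothetical, simplified description of a healthtech product."""
    uses_ai: bool
    processes_patient_data: bool
    has_medical_purpose: bool
    influences_care_decisions: bool


def frameworks_to_review(profile):
    """Return the regulatory questions a team should raise early."""
    review = []
    if profile.processes_patient_data:
        review.append("GDPR: lawful basis, data minimisation, security safeguards")
    if profile.has_medical_purpose:
        review.append("MDR: device qualification and conformity assessment")
    if profile.uses_ai:
        if profile.has_medical_purpose or profile.influences_care_decisions:
            review.append("AI Act: assess possible high-risk classification")
        else:
            review.append("AI Act: check transparency obligations")
    return review


# Illustrative products only.
diagnostic_tool = ProductProfile(
    uses_ai=True, processes_patient_data=True,
    has_medical_purpose=True, influences_care_decisions=True,
)
scheduling_chatbot = ProductProfile(
    uses_ai=True, processes_patient_data=False,
    has_medical_purpose=False, influences_care_decisions=False,
)
print(frameworks_to_review(diagnostic_tool))
print(frameworks_to_review(scheduling_chatbot))
```

The diagnostic tool raises questions under all three frameworks at once, which is why the frameworks need to be considered together rather than in sequence.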

Why early compliance planning matters

Many startups treat regulatory compliance as something to address later in the product lifecycle. In the context of healthcare AI, this approach can create significant challenges.

Retrofitting compliance into an already deployed system may require:

- redesigning data pipelines

- rebuilding technical documentation

- modifying or replacing training datasets

- implementing additional explainability or human oversight mechanisms

These changes can be costly and technically complex, and they may significantly delay market entry. In regulated sectors such as healthcare, where AI systems may also require medical device certification or clinical validation, redesigning systems after development can create additional regulatory and operational burdens.

Early regulatory assessment allows startups to design compliant AI systems from the outset. By integrating governance, data management, documentation, and risk controls into the development process, startups can reduce long-term regulatory exposure and streamline approval or conformity assessment procedures.

Timing is also an important factor. The EU AI Act entered into force on 1 August 2024, and its provisions apply progressively. Most obligations start applying from 2 August 2026, including many of the requirements relevant to high-risk AI systems. For AI systems that are high-risk because they are products, or safety components of products, covered by EU product legislation such as the MDR, the Act as adopted sets a later application date of 2 August 2027. Although these dates may appear distant for early-stage companies, building compliant systems, documentation processes, and governance structures often takes considerable time.

For healthcare startups currently developing AI-based products, the most practical approach is therefore to begin assessing regulatory exposure now. Incorporating AI Act considerations early can help avoid costly redesigns later and ensure that products are ready for deployment as the regulatory deadlines approach.

AI governance as part of responsible health innovation

For healthcare startups, compliance with the AI Act should not be viewed solely as a legal obligation. It is increasingly part of building credibility and trust within a highly regulated and safety-critical sector.

Healthcare providers, regulators, and patients must be able to rely on the technologies they adopt. As AI becomes more embedded in clinical decision-making, expectations around transparency, accountability, and reliability are growing. Demonstrating that an AI system has been designed and tested within a clear governance framework is therefore becoming an important indicator of maturity for digital health companies.

Investors and strategic partners are also paying closer attention to regulatory exposure in AI-driven healthcare solutions. During due diligence processes, startups may be asked to demonstrate that their systems are:

- transparent in how AI contributes to outputs or recommendations

- supported by clear technical documentation and traceability

- designed with appropriate safeguards for patient safety

- aligned with applicable European regulatory standards

Startups that embed AI governance into their development processes are generally better positioned to build partnerships with hospitals, secure regulatory approvals, and scale within the European healthcare ecosystem.

Preparing your healthcare startup for the AI Act

As the AI Act gradually begins to apply across the European Union, healthcare startups should consider taking several practical steps to assess and manage their regulatory exposure.

Key actions may include:

- conducting an early AI risk classification assessment to determine how the system is categorised under the AI Act, and in particular whether it could qualify as a high-risk AI system

- establishing internal AI governance and documentation processes during development

- ensuring that datasets used for training and validation meet regulatory expectations regarding quality, representativeness, and bias mitigation

- aligning AI development practices with Medical Devices Regulation (MDR) requirements where the system qualifies as medical device software

- implementing data protection safeguards in line with GDPR, particularly when processing patient data

- planning ahead for conformity assessments, regulatory review, and post-market monitoring obligations

Taking these steps early can help startups reduce regulatory uncertainty and avoid costly redesigns later in the product lifecycle. At the same time, demonstrating a structured approach to AI governance can strengthen the credibility of healthcare AI solutions in a market where safety, reliability, and regulatory compliance are essential.